LambdaSpy - Implanting the Lambda execution environment (Part two)

In part one of this series, we discussed the AWS Lambda execution environment. In this post, we focus on the challenge of persisting in a serverless environment, gaining access to the data that Lambda functions process, and one possible method in the form of a Lambda implant.

As we develop new capabilities to protect our customers, we regularly dive deep into relevant subsystems to understand how attackers do, or might, compromise an environment, maintain persistent access to it, and steal data from it.

Malware in the cloud

Recently, attackers made headlines with "Denonia", the first publicly known piece of malware deployed as a Lambda function. Denonia is a cryptocurrency miner that attackers deployed into customers’ environments using stolen or leaked AWS credentials. Once deployed, the miner runs at the infected customer’s expense.

Malware within cloud computing environments isn’t new. Malware has been found on infected EC2 instances in the past and is often deployed with the sole purpose of mining cryptocurrency for the attackers. In fact, this past July, Amazon augmented GuardDuty with traditional malware scanning capabilities.

But what if an attacker wanted to accomplish more than simply mining cryptocurrency in a compromised serverless environment? As more organizations move toward serverless solutions, attackers must also adapt in order to compromise and control these environments. Let’s explore what an attacker might do.

Persisting in a Lambda environment

Historically, traditional endpoint-based malware installs as a service, a kernel module, an injected library, or as a startup script or executable. These techniques generally do not work within a Lambda environment. If a Lambda function is compromised via a code exploit, malware will only survive until a shutdown event occurs. Shutdown events occur if no invocation events take place during a period of time or if the function’s configuration is updated. During a shutdown event, the entire execution environment is torn down and wiped. This presents significant challenges to an adversary attempting to persist in this environment.

One way an attacker could persist is to directly modify and re-deploy a Lambda function. This would likely require a deep understanding of deployed code to keep it functioning correctly while persisting in the environment. This becomes even more unrealistic if the targeted function uses a custom runtime. In addition, most functions are updated automatically by a CI/CD process, further complicating persistence.

Lambda extensions, however, offer an additional method to attach to an existing Lambda function and persist across all future invocations. As discussed in part one of this series, extensions allow AWS customers to attach arbitrary code to existing serverless applications. Additionally, external extensions can operate completely independently from whatever runtime is installed.

Extensions as malware

External Lambda extensions offer a potential mechanism for attackers looking to infect existing serverless applications within AWS. By design, extensions can be attached to already deployed functions with no code modifications and can immediately begin interacting with the existing runtime. The extension publisher makes the Layer public and its ARN known, enabling anyone to attach that extension to their Lambda. Layers can be deployed into any AWS account and served to any other account. Once attached, a malicious extension could provide an attacker with persistent unfettered access to any activity within an execution environment.

There are several ways an attacker could attach a malicious extension to a Lambda function. An attacker could use stolen AWS credentials to attach an extension to arbitrary Lambdas, or an extension might be attached as part of an Infrastructure as Code (IaC) deployment. In a worst-case scenario, a backdoor inserted into an existing extension would create a novel supply chain attack that impacts every user of the affected extension.

Gaining access to Lambda events

Regardless of how our malicious extension is attached to a target Lambda function, what methods are available to access data processed by Lambda functions? For example, let’s say an attacker wishes to do more than vanilla crypto mining. Perhaps they want to capture all customer data flowing into a function. This could include information such as customer IP addresses, credit card data, or other PII. Basic Lambda implementation details prevent extensions from doing this, so let’s dig a little deeper.

When Lambdas are invoked, the runtime is responsible for querying the Rapid API to receive the event data associated with the invocation (see part one of this series for more details). This event data is the raw data passed into the Lambda to operate on and may include login details or IP addresses if the request originated from a service such as API Gateway.

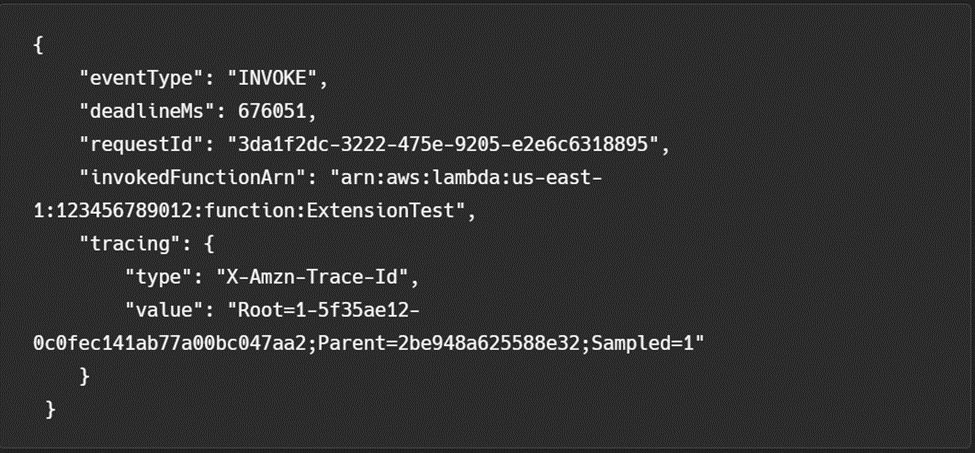

As discussed in part one of this series, Lambda extensions can subscribe to these invocation events; however, AWS does not expose the raw data to the extension. Figure 1 shows the event contents that a typical Lambda extension receives.

Notice that the JSON object in Figure 1 contains only metadata related to the invocation event; no customer data is included. Gaining access to the event body outside the function handler without interruption appears to be extremely difficult by design. In fact, Amazon specifically calls this out in their documentation by saying “Extensions that want to access the function event body can use an in-runtime SDK that communicates with the extension. Function developers use the in-runtime SDK to send the payload to the extension when the function is invoked.”

Therefore, the only supported way for an extension to access the raw event data depends on the Lambda function author using an in-runtime SDK that passes the event data to the extension directly. Let’s keep looking for a method that might work.
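To make the supported flow concrete, here is a minimal sketch of how an external extension registers with the Extensions API and polls for events. The endpoint paths and header names follow the public Extensions API (version 2020-01-01); the function names and the extension name passed in are hypothetical, and this is an illustration rather than LambdaSpy's code. The events returned by the polling call carry invocation metadata only, which is the limitation described above.

```python
import json
import os
import urllib.request

EXTENSION_API_VERSION = "2020-01-01"


def build_register_request(runtime_api: str, extension_name: str) -> urllib.request.Request:
    """Build the registration call an external extension makes at startup."""
    url = f"http://{runtime_api}/{EXTENSION_API_VERSION}/extension/register"
    body = json.dumps({"events": ["INVOKE", "SHUTDOWN"]}).encode()
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            # Must match the file name of the extension executable.
            "Lambda-Extension-Name": extension_name,
            "Content-Type": "application/json",
        },
    )


def build_next_event_request(runtime_api: str, extension_id: str) -> urllib.request.Request:
    """Build the long-poll call that blocks until the next lifecycle event."""
    url = f"http://{runtime_api}/{EXTENSION_API_VERSION}/extension/event/next"
    return urllib.request.Request(
        url,
        method="GET",
        headers={"Lambda-Extension-Identifier": extension_id},
    )


def event_loop(extension_name: str) -> None:
    """Register, then print each event; only works inside a Lambda sandbox."""
    runtime_api = os.environ["AWS_LAMBDA_RUNTIME_API"]
    with urllib.request.urlopen(build_register_request(runtime_api, extension_name)) as resp:
        extension_id = resp.headers["Lambda-Extension-Identifier"]
    while True:
        with urllib.request.urlopen(build_next_event_request(runtime_api, extension_id)) as resp:
            event = json.load(resp)
        print(event)  # invocation metadata only, never the event body
        if event.get("eventType") == "SHUTDOWN":
            break
```

Calling `event_loop("my-extension")` from an executable placed in the layer's extensions directory would run this loop; outside a Lambda execution environment the environment variable is absent and the call fails.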

Hooking activity within an execution environment

Since there is no supported way for an extension to access raw event data, let’s refer back to the Lambda internals described in our first post of this series. Recall that the entire execution environment relies on local interprocess communication (IPC) in the form of an HTTP endpoint served by Rapid. If a malicious extension were able to man-in-the-middle requests sent to Rapid, the extension could gain full control over the entire execution environment. Not only would this provide full control over data sent to and from the customer Lambda code, but since other extensions rely on this same endpoint, the extension could control other extensions as well.

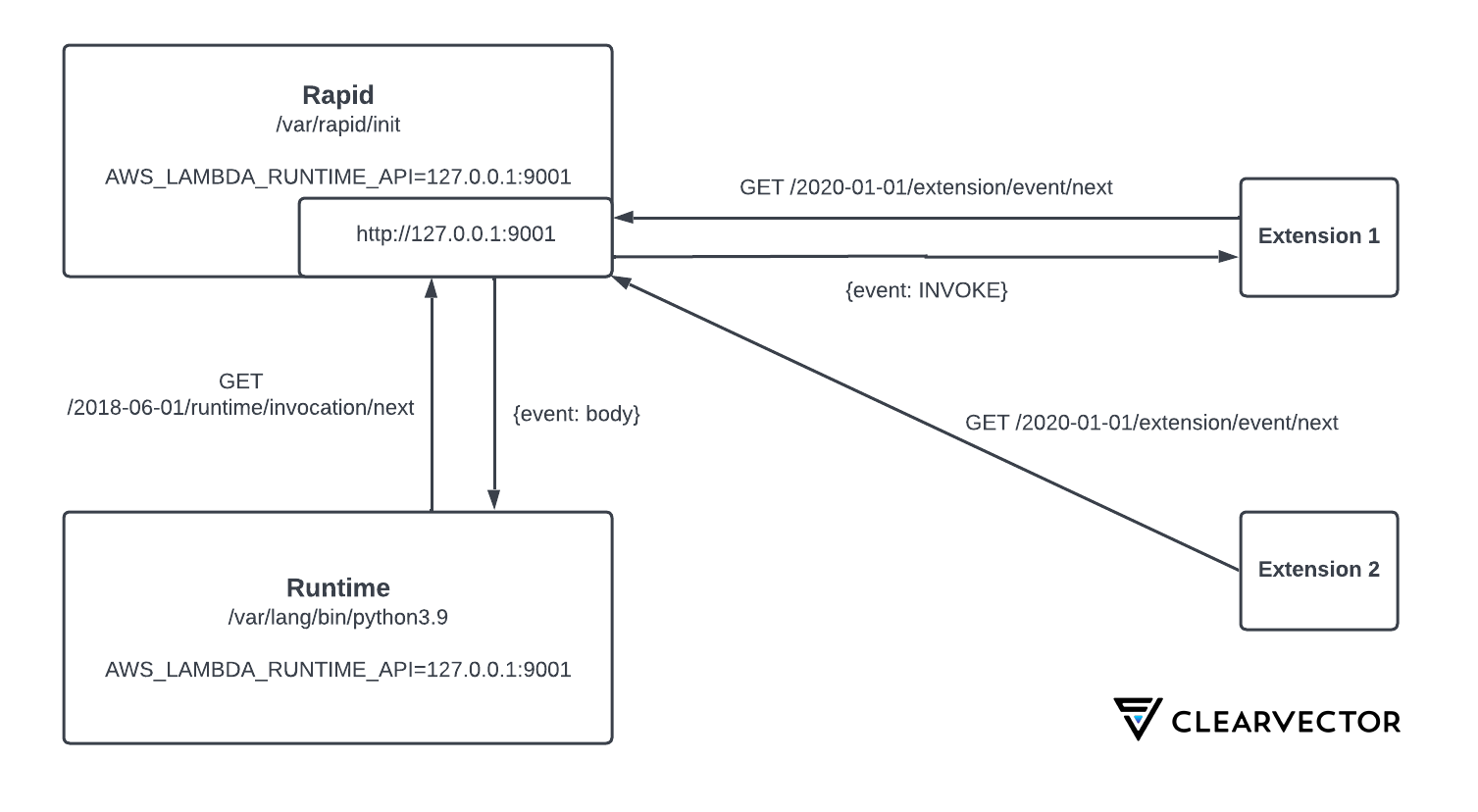

As shown in Figure 2, Rapid listens for all API requests on http://127.0.0.1:9001. Lambda extensions are loaded and executed before any runtime code, but after Rapid. Figure 2 illustrates the normal interactions between a Python Lambda function and two external extensions.

In this example, the Python process as well as the extensions are child processes of Rapid (PID 1). As expected, the child processes inherit the relevant environment variables declared within the init process. In the first post of this series, we mentioned that environment variables drive almost everything within the runtime. The variable named “AWS_LAMBDA_RUNTIME_API” informs the child runtime processes and other extensions of the IP and port number of the Rapid API.
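As a rough illustration of how much depends on this one variable, the sketch below derives the URL a runtime polls for its next invocation from “AWS_LAMBDA_RUNTIME_API”. The path follows the public Lambda runtime API (version 2018-06-01); the helper names are hypothetical.

```python
import os
from typing import Mapping, Tuple

RUNTIME_API_VERSION = "2018-06-01"


def parse_runtime_api(value: str) -> Tuple[str, int]:
    """Split a 'host:port' value such as '127.0.0.1:9001'."""
    host, _, port = value.rpartition(":")
    if not host or not port.isdigit():
        raise ValueError(f"unexpected AWS_LAMBDA_RUNTIME_API value: {value!r}")
    return host, int(port)


def next_invocation_url(environ: Mapping[str, str] = os.environ) -> str:
    """Return the endpoint a runtime polls for the next event."""
    host, port = parse_runtime_api(environ["AWS_LAMBDA_RUNTIME_API"])
    return f"http://{host}:{port}/{RUNTIME_API_VERSION}/runtime/invocation/next"
```

Whatever address the variable holds when a process starts is the address that process will talk to, which is why its value matters so much in what follows.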

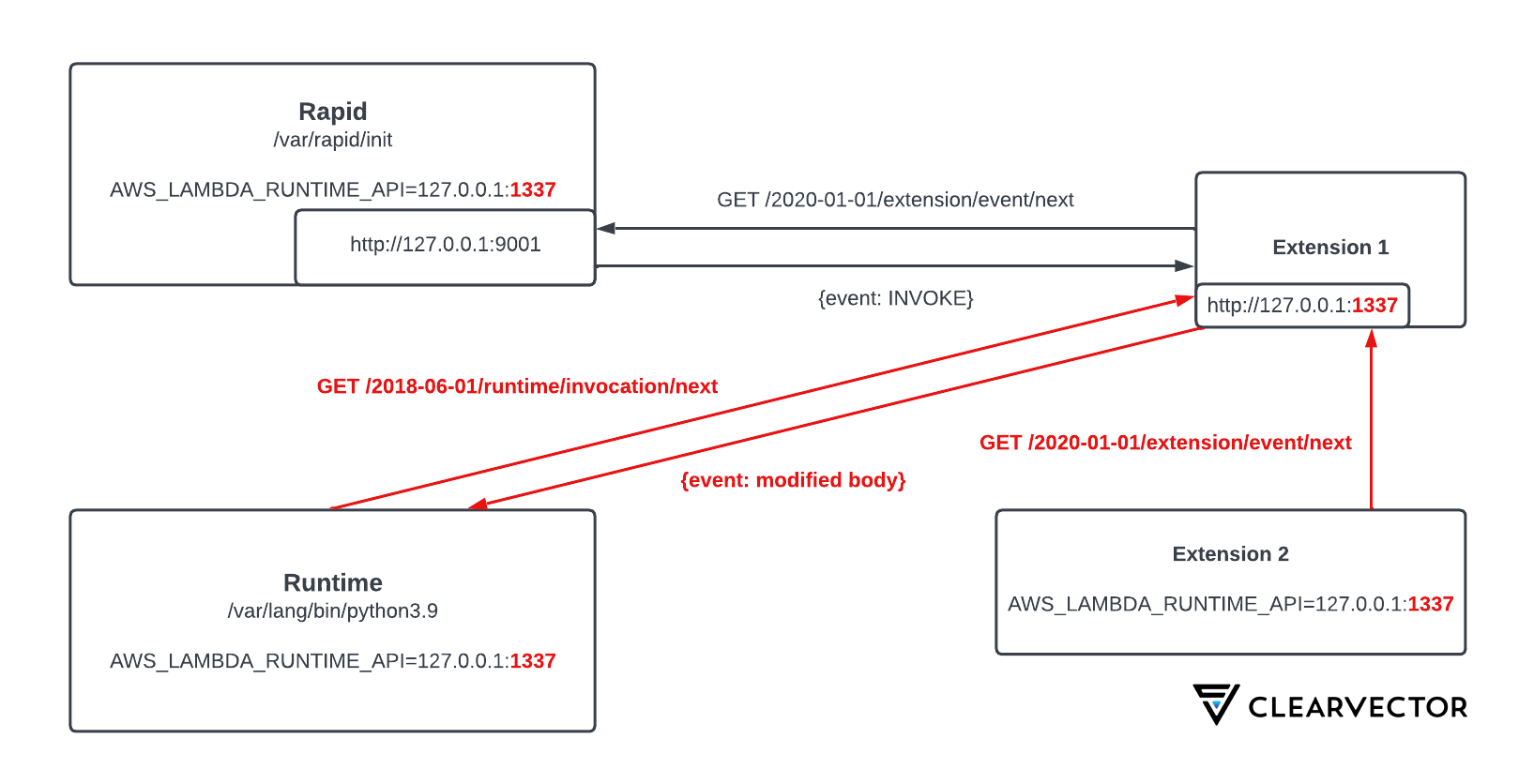

Overwriting this environment variable with a port number that we control will allow us to man-in-the-middle all activity within the Lambda runtime. But how can we do this? In the first post of this series, we discussed how the default Linux kernel in the Lambda runtime environment is compiled with support for the “process_vm_readv” and “process_vm_writev” system calls. In addition, all processes run with the same user ID. This means that our extension has full read and write access to Rapid’s heap memory, by design.
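The scan-and-overwrite idea can be sketched on an ordinary in-process buffer. The code below does not touch another process: it assumes a byte snapshot has already been obtained by some other means and only shows the search and the in-place replacement. The function names and the replacement address are hypothetical, and the same-length restriction is an assumption of this sketch (it keeps neighbouring bytes intact), not a statement about how the proof-of-concept works.

```python
from typing import List

NEEDLE = b"AWS_LAMBDA_RUNTIME_API="


def find_env_entries(memory: bytes) -> List[int]:
    """Return every offset at which the variable name appears in the snapshot."""
    offsets = []
    start = memory.find(NEEDLE)
    while start != -1:
        offsets.append(start)
        start = memory.find(NEEDLE, start + 1)
    return offsets


def overwrite_value(memory: bytearray, offset: int, old_value: bytes, new_value: bytes) -> None:
    """Replace old_value with new_value directly after the variable name."""
    if len(new_value) != len(old_value):
        # Assumption of this sketch: keep the length so adjacent data is untouched.
        raise ValueError("replacement must have the same length as the original")
    value_start = offset + len(NEEDLE)
    value_end = value_start + len(old_value)
    if bytes(memory[value_start:value_end]) != old_value:
        raise ValueError("snapshot does not contain the expected value at this offset")
    memory[value_start:value_end] = new_value
```

For example, replacing `b"127.0.0.1:9001"` with a hypothetical `b"127.0.0.1:9009"` changes only the last digit of the port and leaves the rest of the buffer byte-for-byte identical.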

To test this, we implemented a proof-of-concept that scans Rapid’s heap memory and overwrites this environment variable. Once overwritten, all new processes will use this port number to send and receive events. Figure 3 shows how this modification impacts all event flows within the execution environment.

Since extensions execute before any runtime code, the updated environment variable will take effect when the runtime process is executed (Python, Java, Node, Ruby, etc). In addition, any extensions that are loaded after ours, and use this variable, will be proxied through our extension as well. This could allow malware to completely disable security products or logging extensions from within the runtime itself.

Let's see if we can capture and modify raw event body data used during Lambda function invocations. AWS provides a “hello world” example Lambda function, which we will use to demonstrate basic Lambda executions. This function, by default, simply prints the invocation event and exits.

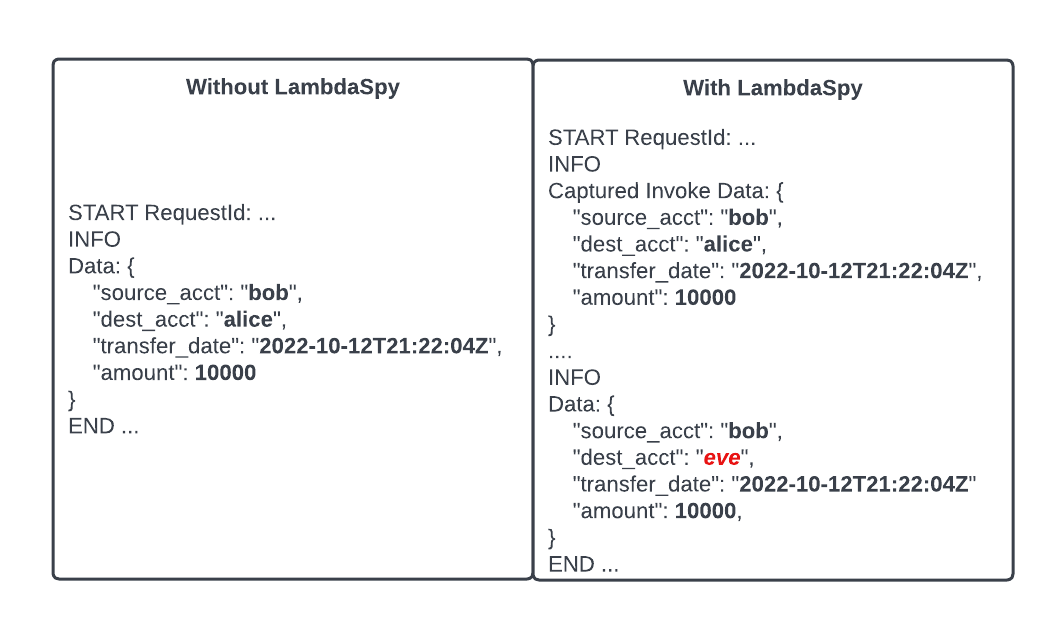

For this example, imagine a serverless banking application that transfers funds between accounts. A user named Bob wants to transfer funds to another user, Alice. The left side of Figure 4 shows what a $10,000 transaction might look like. Now, imagine an attacker named Eve has attached a malicious Lambda extension in order to re-route transfers to an attacker-controlled account. The right side of Figure 4 shows that Eve’s malicious extension intercepted the request and modified the “dest_acct” field from “alice” to “eve”. Malware that can man-in-the-middle requests sent between the Rapid API and the runtime code can potentially gain full control of a serverless application without any code modification.
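The tampering step itself is trivial once the event body passes through attacker-controlled code. The sketch below shows only that step as a pure function over the raw bytes. Only the “dest_acct” field name comes from the example above; the function name, the other field names in the usage note, and the replacement value are hypothetical.

```python
import json


def rewrite_destination(raw_event: bytes, new_dest: str) -> bytes:
    """Return the event with 'dest_acct' replaced; other fields pass through."""
    event = json.loads(raw_event)
    if isinstance(event, dict) and "dest_acct" in event:
        event["dest_acct"] = new_dest
    return json.dumps(event).encode()
```

Given a hypothetical body such as `{"src_acct": "bob", "dest_acct": "alice", "amount": 10000}`, the function returns the same JSON with only the destination changed. Nothing in the payload tells the handler that the event was altered in transit.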

Introducing LambdaSpy

LambdaSpy is a proof-of-concept Lambda external extension that demonstrates the techniques used in this post to inspect and modify raw event data. LambdaSpy is written in Rust, is compatible with most runtimes, and works with both AMD64 and ARM64 architectures. Check it out here. Although LambdaSpy is discussed in the context of a malicious extension, we believe this has applicability for fuzzing and testing of Lambda applications as part of a normal SDLC.

Mitigating LambdaSpy and similar attacks

In addition to best practices for Lambda development and governance, ClearVector recommends:

- Using policies (IAM/SCP) to control who can attach Lambda extensions. For example, limit access to the UpdateFunctionConfiguration API.

- Using code signing and profiles (AWS Signer) to prevent unintended or untrusted extensions from being attached. For example, this limits risk in the case of compromised developer or CI/CD credentials.

- Auditing existing extensions to confirm provenance and acceptable use.
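As a starting point for the audit recommendation, the sketch below flags functions whose attached layers come from accounts outside an allow-list. It assumes function configurations shaped like the entries returned by the Lambda ListFunctions API (a “FunctionName” plus an optional “Layers” list of objects with an “Arn”); the helper names and the account IDs in the usage note are hypothetical. It inspects all layers, since an extension is delivered as a layer, and does not try to tell extensions apart from other layers.

```python
from typing import Dict, Iterable, List, Mapping, Set


def layer_account(layer_arn: str) -> str:
    """Extract the owning account ID from a layer or layer-version ARN."""
    # Expected shape: arn:aws:lambda:<region>:<account>:layer:<name>[:<version>]
    parts = layer_arn.split(":")
    if len(parts) < 7 or parts[2] != "lambda" or parts[5] != "layer":
        raise ValueError(f"not a Lambda layer ARN: {layer_arn!r}")
    return parts[4]


def untrusted_layers(
    functions: Iterable[Mapping], trusted_accounts: Set[str]
) -> Dict[str, List[str]]:
    """Map each function name to its layers owned by non-trusted accounts."""
    findings: Dict[str, List[str]] = {}
    for fn in functions:
        for layer in fn.get("Layers", []):
            arn = layer["Arn"]
            if layer_account(arn) not in trusted_accounts:
                findings.setdefault(fn["FunctionName"], []).append(arn)
    return findings
```

The input could be collected with an AWS SDK (for instance, by paging through ListFunctions) and passed in as plain dictionaries. Any function that appears in the result deserves a closer look at where its layers came from.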

We plan to make a dedicated post on this topic in the future.

In addition, after performing this research, ClearVector worked with the AWS security and AWS Lambda teams to understand the current design decisions and security boundaries for Lambda extensions. We look forward to working with AWS in the future to help AWS customers understand the risk associated with Lambda extensions, and to discuss possible architectural improvements to the Lambda sandbox that would reduce the risk and impact of third-party or malicious Lambda extensions.

Conclusion

In this post, we discussed the challenge of persisting in a serverless environment, and demonstrated how an attacker might intercept, modify, or deny all activity that occurs within a Lambda execution environment. In addition, we explored ways to persist indefinitely in the Lambda execution environment. Finally, we released proof-of-concept code, LambdaSpy, and provided mitigation strategies.

This is only one avenue an attacker could potentially use to compromise a Lambda execution environment. There are many other environment variables that could be modified to directly influence the execution environment of Lambda. As the cloud computing landscape changes, attackers will be forced to adapt along with it.